Promoting transparency among nonprofit organizations: What donors can learn from Transparify’s experiences

Background

In 2013, the Transparify initiative began to advocate for greater funding transparency among a particular subset of nongovernmental organizations (NGOs): think tanks. While no universally accepted definition of the term exists, a think tank is typically a nonprofit institution that conducts research and then uses its research findings to inform and influence policy making. These functions situate think tanks at the nexus between academic research, public education, and advocacy. (For more information, see Defining the think tank label.)

Think tanks can make a strong contribution to strengthening democratic processes and improving policy outcomes in fields as diverse as public health and smallholder agriculture. At the same time, there are concerns in countries both rich and poor about powerful funders’ potential ability to co-opt policy-relevant nonprofits and use them to manipulate democratic debates, policy formulation processes, and decision-making. Numerous sources have argued that funding from undisclosed sources is particularly problematic in this regard, as it leaves the public in the dark about the sponsors behind a given piece of research, policy prescription, or advocacy drive (see Think Tank Transparency, Funding, Policy Influence, and Corporate Interests). Opacity about a think tank’s funding sources threatens to undermine democracy by skewing democratic deliberations and decision-making processes in line with funders’ vested interests. At the same time, it undermines the credibility of all think tanks, including those with nothing to hide.

To improve think tanks’ financial transparency, Transparify used a low-cost approach combining direct engagement, quantitative ratings, and advocacy to convince dozens of think tanks in 47 countries to voluntarily publish their funding sources. By adhering to Transparify’s transparency standards, think tanks signaled their commitment to transparency and integrity in policy research and advocacy. In just four years, the initiative catalyzed a systemic shift towards transparency among think tanks in the United States and several European countries, with an increasing number of institutions achieving the top ratings of 4 and 5 stars (see How Transparent are Think Tanks about Who Funds Them 2016?).

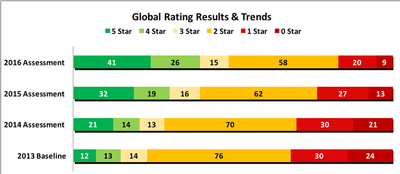

Chart 1: Shift towards transparency among think tanks engaged by Transparify worldwide, 2013-2016 (numbers of think tanks)

Source: Transparify 2016 study (5 star = highly transparent)

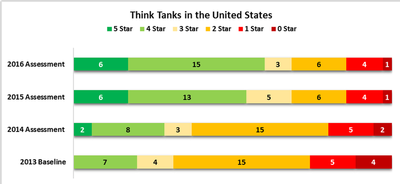

Chart 2: Shift towards transparency among United States think tanks engaged by Transparify, 2013-2016 (numbers of think tanks)

Source: Transparify 2016 study (5 star = highly transparent)

Transparify's approach: Engagement, Rating, Advocacy

Engagement

Months before launching its ratings, Transparify individually emailed the executive directors of 169 think tanks in 47 countries, explaining the aims of the initiative and the forthcoming ratings, and inviting them to place more data online over the coming weeks. Think tanks that were willing to place more information online but were unable to do so at short notice had the option of committing to ‘update’ in future, an undertaking that would be positively highlighted in the report. Crucially, Transparify invited all addressees to engage in a dialogue about the ratings process. Dozens of think tanks responded with comments and questions, in many cases leading to protracted exchanges of emails and follow-up Skype calls.

Rating

Think tanks are diverse in terms of size, organizational setup, and funding sources. They include fully state-funded institutions, organizations entirely dependent on private sector contributions, and others that combine public grants, foundation money, industry funding, membership fees, and other sources. To tackle this organizational diversity, Transparify needed to develop a rating system that was simple and objective, but at the same time able to meaningfully capture disclosure levels across this immense variety of institutions, from tiny outfits operating on a shoestring in Ukraine to established Washington D.C. research powerhouses with dozens of full-time staff.

Based on a review of existing disclosure levels on think tank websites, Transparify eventually developed a 5-star system based on an ideal type approach. Think tanks would be awarded between 0 and 5 stars according to which disclosure level their website most closely approximated.

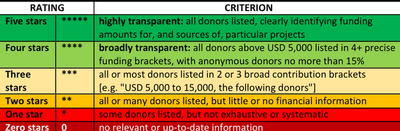

Chart 3: Transparify’s rating system

Source: Transparify website. Note that organizations may exceptionally list privacy-minded donors as “anonymous”, but in order to qualify as transparent, an organization needs to disclose the sources of over 85% of its funding volume.
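
The 85% threshold mentioned in the note above comes down to simple arithmetic. The following Python sketch is illustrative only: the function names, the donor list format (with `None` marking an anonymous donor), and the sample figures are assumptions made for this example and are not part of Transparify's published methodology.

```python
def disclosed_share(donations):
    """Return the fraction of total funding volume that has a named source.

    `donations` is a list of (donor_name, amount) pairs. A donor_name of
    None marks an anonymous contribution (a hypothetical convention).
    """
    total = sum(amount for _, amount in donations)
    if total <= 0:
        raise ValueError("total funding volume must be positive")
    named = sum(amount for name, amount in donations if name is not None)
    return named / total


def meets_disclosure_threshold(donations, threshold=0.85):
    # The note requires disclosure of *over* 85% of funding volume,
    # so the comparison is strict.
    return disclosed_share(donations) > threshold


# Hypothetical figures, invented purely for illustration.
sample = [("Foundation A", 600_000), ("Ministry B", 300_000), (None, 100_000)]
print(meets_disclosure_threshold(sample))
```

In this invented example, named donors account for 90% of the funding volume, so the organization would clear the threshold despite listing one anonymous donor.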

Transparify has compiled a brief guide for think tanks wishing to pursue excellence in financial disclosure. We encourage institutions aspiring to five-star disclosure to contact us beforehand as Transparify may in future review and slightly modify the criteria for 5-star ratings.

To rate individual think tanks, Transparify recruited and trained a pool of university graduates. These ‘citizen raters’ worked independently of each other to visit think tanks’ websites. Following a set protocol, they searched the websites for funding information and, based on this information, assigned each institution a rating of between 0 and 5 stars via an online form. Each think tank was rated by at least two raters in order to provide a safeguard against oversights.

Where ratings diverged, this was usually because relevant information was fragmented across different pages of a think tank’s website. In such situations, Transparify’s ratings manager reviewed the rival findings and determined the correct score. When raters were unsure how a think tank’s disclosure fit into the 5-star system, they referred the question to an independent adjudicator, whose task it was to deal with borderline cases and – importantly – to ensure consistent application of the system across institutions and time.

As a final quality control step, Transparify then contacted each think tank with its rating result, giving it the opportunity to challenge its score or point out any information that may have been overlooked.
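
The double-rating step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Transparify's actual tooling: the function name `reconcile`, the return format, and the sample institutions are all hypothetical.

```python
def reconcile(ratings):
    """Combine independent star ratings for one think tank.

    Returns (score, needs_review). When all raters agree, the shared score
    is accepted. When they diverge, score is None and the case is flagged
    so that a person (here, the ratings manager) can review the findings.
    """
    if len(ratings) < 2:
        raise ValueError("each think tank needs at least two independent ratings")
    if any(r not in range(6) for r in ratings):
        raise ValueError("ratings must be whole stars from 0 to 5")
    if len(set(ratings)) == 1:
        return ratings[0], False
    return None, True


# Hypothetical submissions, invented for illustration.
submitted = {"Institute X": [5, 5], "Centre Y": [2, 3, 3]}
for name, stars in submitted.items():
    print(name, reconcile(stars))
```

The sketch deliberately does not resolve disagreements by averaging or majority vote, since the text describes divergent ratings as being settled by human review rather than by a formula.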

Advocacy

In the early days of the initiative, Transparify reached out to dozens of players in the wider think tank community, including transparency and anti-lobbying groups, academics studying think tanks, apex institutions, and bloggers. Transparify explained the initiative via specialist forums and platforms and furthermore invited dozens of key stakeholders to share their views and experiences on Transparify’s own blog (see in particular older blog posts). Thus, those inside the small circle of think tank experts were already familiar with the initiative and understood its aims and general approach when Transparify launched its first report, adding to the credibility of Transparify’s approach.

Compiling four annotated bibliographies (Transparency, Funding, Policy Influence, and Corporate Interests) of media stories on think tanks yielded a long list of journalists and bloggers interested in the sector. Transparify contacted these journalists in advance, in some cases sharing embargoed copies of the report. The first year’s launch was an unqualified success, as evidenced by the fact that the New York Times ran a front-page story on the report.

Lessons learned: Early engagement, clear rating systems, and how to change organizational behavior

Engagement

One-on-one. Using a friendly and respectful tone, Transparify tried to reach out individually to every think tank it planned to rate. In many cases, this led to long exchanges of emails and phone calls. On occasion, Transparify provided direct technical assistance to communications or finance staff to support their disclosure efforts. The initiative’s team spent more time communicating with individual think tanks than on all other activities combined, but the impact of this one-on-one engagement was sufficiently strong to justify the large resource allocation.

Winners. Transparify’s aim was to increase transparency rather than to take a snapshot of opacity. The initiative created early winners by inviting think tanks to disclose more data months before the ratings began, an invitation that many of them took up. It further facilitated buy-in by creating two levels of transparency, “highly” and “broadly”, with the second one being easier to attain. Some of these think tanks later became outspoken allies of Transparify, lending their own credibility to the initiative and helping to amplify its message through their own – usually far more extensive – communication networks.

Rating system

The 5-star rating system based on ideal types worked well overall. Results on the 0-5 star scale could easily be visualized and communicated. The use of ideal types leaves each think tank free to determine how exactly it discloses information, promoting ownership and innovation. Having a highly capable adjudicator was indispensable, as there were many ambiguous and borderline cases that did not fall neatly within any of Transparify’s categories; the adjudicator ensured that any judgement calls were made according to criteria applied consistently over time and space. As the ratings are based on online data only, any external party, including the institution rated, can independently verify the rating result.

Ratings process. Transparify delegated the rating work to a pool of ‘citizen raters’, based on the premise that financial data should be easy to locate by any person with internet access, rather than only by expert researchers. On the upside, this cut down on costs, as most raters, with a little training, returned largely reliable and consistent results. On the downside, the system is vulnerable to data quality issues, as even two (or three) raters may overlook data tucked away in unconventional locations on institutions’ websites. In contexts where publishing an incorrect rating result could cause reputational damage, this requires verification of results with the institution rated, which increases costs. Transparify is currently rethinking its ratings process.

Advocacy

No shortcuts. Transparify had assumed that the publication of a weak rating result combined with the promise of a follow-up rating one year afterwards would in and of itself prompt a think tank to move to greater transparency, opening the possibility of rating and cheaply ‘transparifying’ hundreds of organizations at a time. This did not happen. Transparify’s experience has been that without some form of direct engagement, very few organizations will change their behavior; a notable exception to this rule is when Transparify’s rating results are prominently reported by national media.

Top-down approaches have so far not delivered. Transparify had originally expected key think tank donors and think tank networks to embrace the 5-star Transparify standard and eventually require all their grantees and partners to fully disclose their funding sources, not least because greater grantee transparency is arguably in many donors’ own interests. However, to date, only two small donors have begun nudging their grantees towards greater transparency (see Transparent Donors, Opaque Grantees: High Time for a Nudge).

Transparify’s efforts to convince Transparency International and the Fisheries International Transparency Initiative to set a positive example by requiring their network members and participating CSOs to be transparent in future have not so far been successful (see Pro-Transparency Organizations Fail to Practice What They Preach). Transparify intends to step up its advocacy with donors and networks in the future.

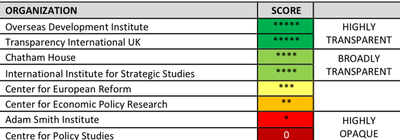

Cohorts. Think tanks primarily compare themselves to their national peers. Results tables grouped by region are not effective at stimulating behavior change: think tanks nor journalists pay much more attention to what happens within their own national borders. The most high-impact approach to date has been to select a national cohort, inform all think tanks that they will be compared to their peers, engage them intensively, and offer the carrot and stick of strong national media coverage of results. In the future, Transparify will abandon its original global scattershot approach and instead focus on transparifying one country at a time, while experimenting with sector-based cohort approaches. The charts below show how cohort comparisons look in practice, and illustrate the difference in impact that pursuing a cohort approach can make.

Chart 4: Displaying national cohort results for UK think tanks, 2016 [extract]

Source: Transparify 2016 report (truncated UK results table)

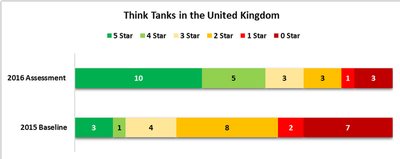

Chart 5: Dramatic UK transparency shift 2015-2016 illustrates the power of a cohort approach

Source: Transparify 2016 report. Think tanks rated with 4 or 5 stars are transparent.

Progress. Transparency has proven to be a one-way street among think tanks. Out of 169 organizations assessed in 2013, dozens have become more transparent, but only two have become more opaque. This strongly suggests that think tanks are not experiencing any drawbacks as a result of becoming more transparent.

Utility. While average transparency levels tend to be higher in the Global North, many individual think tanks in developing countries are also highly transparent, including some that are based in countries with repressive regimes and/or challenging security environments, such as Ethiopia, Pakistan and Ukraine (see How Transparent are Think Tanks about Who Funds Them 2016?). There is no correlation between a country’s political freedom score and the average transparency level of its think tanks (forthcoming study by Dustin Gilbreath, 2016). In at least one case, a 5-star think tank has been able to use its Transparify Award to defend itself from a hostile attack by its national government.

Wider applicability

Rating system

Assessment scales based on ideal types could also be employed in other transparency ratings and rankings. They are far easier to explain and publicize than complex composite indicators or compliance-based assessment approaches, and allow raters to uniformly assess highly heterogeneous target populations. Additionally, they encourage institutions to think about how they can best communicate financial data to their primary stakeholders, rather than about how they can attain compliance with minimal effort, which is often the instinctive bureaucratic reaction to other scoring systems.

Target groups

Transparify’s assessment methodology can be used for every nongovernmental organization that does not exclusively rely on a multitude of tiny donors. However, the salience of funding transparency varies from one NGO to the next. Integrity concerns related to think tanks usually revolve around income transparency: where the money comes from and donors’ possible undue influence. The most salient financial question about service delivery focused NGOs is one of expenditure transparency: where the money goes to and possible diversion by management and staff. In addition to think tanks, Transparify has identified NGOs engaged in nonprofit journalism and advocacy NGOs as organizations where greater income transparency could be especially beneficial. Beyond NGOs, the rating system could also be applied to political parties, as long as the focus is on voluntary disclosure rather than legal or regulatory compliance.

See also the U4 Theme on Evaluation and Measurement

The U4 Anti-Corruption Resource Centre is part of the Chr. Michelsen Institute in Norway.